AI detection tools for academic integrity use textual pattern analysis, stylometric scoring, and machine learning to flag AI-generated student submissions. The best tools for academic environments in 2026 combine high accuracy on fully AI-generated text (85–95%) with low false positive rates, LMS integration, and transparent reporting. No single tool is perfect — especially for mixed human-AI content or non-native English writers — so most institutions pair detection software with human review.

Key Takeaways

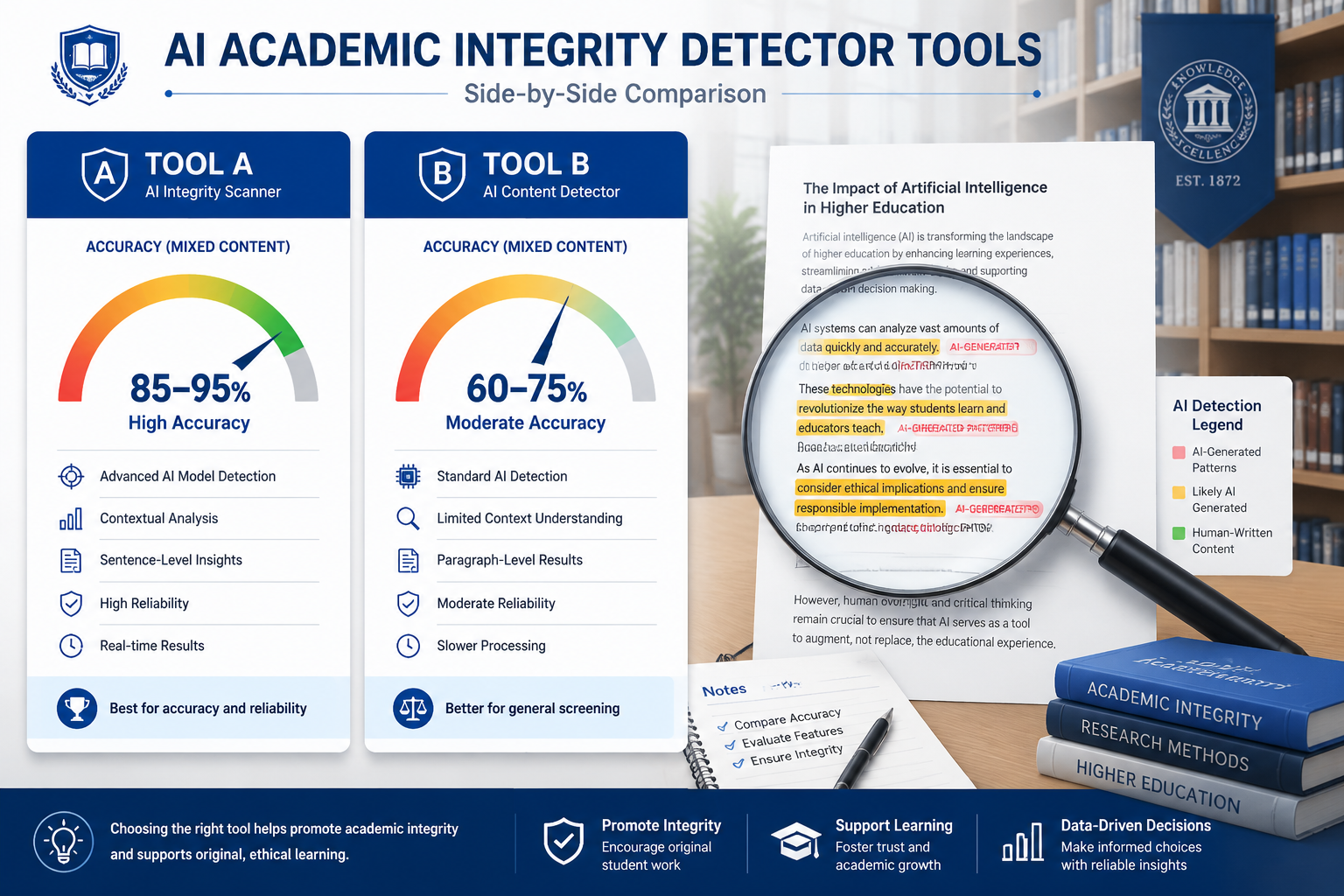

- Detection accuracy varies widely: Tools reach 85–95% accuracy on fully AI-generated text, but drop to 60–75% on mixed human-AI content [1]

- False positives are a real risk, particularly for non-native English speakers and students with certain learning differences [1]

- Free AI detectors show inconsistent results across different writing styles and submission types [4]

- Modern tools go beyond simple plagiarism checks — they use semantic analysis, behavioral analytics, and linguistic fingerprinting to catch paraphrased and translated AI content [1]

- The University of California system combined multiple tools with human review, achieving a 40% reduction in confirmed integrity violations and a 25% increase in faculty confidence [1]

- Process-tracking tools (which monitor how a document was written) are emerging as a strong complement to score-based detectors [4]

- Biometric and blockchain verification are moving from pilot programs into wider institutional adoption in 2026 [1]

- No detector should be the sole basis for academic penalties — institutional policies must account for tool limitations

- Proofademic AI and Paperpal are among the tools built specifically for academic contexts, with features designed for educators

What Are AI Detection Tools Actually Measuring?

AI detection tools analyze text to determine whether it was written by a human or generated by an AI model. They do this by examining statistical patterns that differ between human and machine writing.

Specifically, modern academic AI detectors look for:

- Perplexity scores: How predictable or “surprising” the word choices are. AI text tends to be statistically predictable [1]

- Burstiness: Human writing varies in sentence length and complexity; AI output is more uniform [1]

- Stylometric fingerprints: Unique patterns tied to specific AI models based on their training data [1]

- Semantic consistency: Whether ideas flow in the structured, almost-too-clean way that large language models tend to produce [3]
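Two of the signals above can be illustrated with a toy sketch. This is not how any named vendor computes its scores: commercial detectors typically estimate perplexity with a large language model, whereas the hypothetical `unigram_perplexity` below uses simple word counts from a reference text, and `burstiness` is just the coefficient of variation of sentence length. All function names and sample strings are assumptions made for illustration.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Split on ., !, ? and return the word count of each non-empty sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std / mean).

    Higher values mean sentence lengths vary more. Returns 0.0 when there
    are fewer than two sentences, since variation is undefined there.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    A toy stand-in for model-based perplexity: lower values mean the words
    in `text` are more predictable given the reference word frequencies.
    """
    words = text.lower().split()
    if not words:
        raise ValueError("text must contain at least one word")
    ref_counts = Counter(reference.lower().split())
    vocab_size = len(set(ref_counts) | set(words))
    total = sum(ref_counts.values())
    log_prob = sum(
        math.log((ref_counts[w] + 1) / (total + vocab_size)) for w in words
    )
    return math.exp(-log_prob / len(words))


# Hypothetical sample texts: one with uniform sentences, one with varied ones.
uniform = "The cat sat on the mat. The dog lay on the rug. The bird sat on the branch."
varied = "Stop. The cat, which had been asleep on the mat all afternoon, finally stirred and stretched. Why?"

print(burstiness(uniform))             # every sentence has six words, so 0.0
print(round(burstiness(varied), 2))    # noticeably higher
```

Neither number is meaningful on its own. A real detector combines many such signals and calibrates them against labeled data, which is one reason scores differ between tools.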

“Detection systems use statistical analysis to identify AI-generated content by examining consistent sentence length, predictable word choices, and lack of personal voice.” — Evelyn Learning [1]

These tools are not plagiarism checkers in the traditional sense. They don’t match text against a database of known sources. Instead, they score the likelihood that a piece of text was machine-generated. That distinction matters when interpreting results.

How Accurate Are AI Detectors in Academic Settings?

The honest answer: accurate enough to flag, not accurate enough to convict. Detection tools perform well on fully AI-generated text but struggle with edited or hybrid submissions.

Here’s what the evidence shows:

| Content Type | Typical Accuracy Range |
|---|---|
| Fully AI-generated text | 85–95% [1] |
| Mixed human-AI content | 60–75% [1] |
| Heavily edited AI text | Below 60% (estimated) |
| Non-native English writing | Higher false positive risk [1] |

The accuracy drop for mixed content is the most important number for educators to understand. Students who use AI to draft and then rewrite substantially will often slip past detectors. That’s not a flaw unique to one tool — it’s a fundamental limitation of pattern-based detection.

Common mistake: Treating a high AI-probability score as proof of cheating. A score of 80% means the tool is fairly confident, not certain. Human review is still essential before any disciplinary action.
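A short calculation shows why a flag is not proof. The sketch below applies Bayes' rule to estimate how often a flagged submission is actually AI-generated. All three inputs are hypothetical: the 0.90 detection rate is chosen from within the 85–95% range quoted above (and treats that accuracy figure as a detection rate, which is a simplification), while the 5% false positive rate and 10% share of AI-written submissions are assumptions, not measured values.

```python
def probability_flag_is_correct(
    sensitivity: float, false_positive_rate: float, prevalence: float
) -> float:
    """Share of flagged submissions that really are AI-generated (Bayes' rule).

    sensitivity:          fraction of AI-generated submissions the tool flags
    false_positive_rate:  fraction of human-written submissions the tool flags
    prevalence:           fraction of all submissions that are AI-generated
    """
    true_flags = sensitivity * prevalence
    false_flags = false_positive_rate * (1.0 - prevalence)
    return true_flags / (true_flags + false_flags)


# Hypothetical inputs, for illustration only.
ppv = probability_flag_is_correct(
    sensitivity=0.90, false_positive_rate=0.05, prevalence=0.10
)
print(f"{ppv:.2f}")  # 0.67
```

Under these assumed numbers, roughly one flagged submission in three was written by a human. The result is sensitive to the assumed prevalence, which is rarely known in practice.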

Detector Tools Compared: The Main Contenders

Several tools have positioned themselves specifically for academic use in 2026. Here’s how the main options break down.

Proofademic AI

Proofademic AI is built specifically for academic integrity workflows. It combines AI detection with plagiarism checking and offers detailed reports that educators can use in review conversations with students. Its academic focus means the interface and reporting language are designed for faculty, not just general content teams.

Best for: Institutions that want a dedicated academic tool with educator-friendly reporting.

Paperpal AI Detector

Paperpal’s AI detector is integrated into a broader academic writing platform. It’s particularly useful for institutions that want to support legitimate AI-assisted writing while still flagging fully generated submissions. The tool provides sentence-level highlighting, which helps reviewers see exactly which passages triggered the detection.

Best for: Institutions balancing AI policy enforcement with support for ethical AI use in writing.

Pangram AI

Our Pangram AI review found it to be one of the more accurate general-purpose detectors, with the platform claiming high detection rates in testing. It uses a combination of linguistic analysis and model-specific fingerprinting.

Best for: Educators who want a high-sensitivity detector and are comfortable managing false positive follow-up.

ContentDetect.ai

ContentDetect.ai offers a free tier, making it accessible for smaller institutions or individual instructors. However, free AI detectors as a category show mixed results, particularly with non-native English submissions [4]. It’s a reasonable starting point but not a replacement for a dedicated academic tool.

Best for: Instructors who need a free option for occasional checks, not institutional-scale deployment.

WasItAIGenerated

WasItAIGenerated supports multiple file formats, which is useful for institutions receiving submissions in different formats. It provides a probability score with a breakdown of flagged sections.

Best for: Instructors handling diverse submission formats who need a flexible detection option.

AICheckerPro

AICheckerPro positions itself as a comprehensive detection solution with batch processing capabilities. For departments handling high submission volumes, batch processing is a practical advantage.

Best for: High-volume academic environments where checking submissions one at a time isn’t practical.

Quick Comparison Table

| Tool | Academic Focus | Free Tier | Sentence-Level Detail | LMS Integration |
|---|---|---|---|---|
| Proofademic AI | ✅ Yes | Limited | ✅ Yes | Check Vendor |
| Paperpal | ✅ Yes | ✅ Yes | ✅ Yes | Partial |
| Pangram AI | Partial | Limited | ✅ Yes | No |
| ContentDetect.ai | General | ✅ Yes | Partial | No |
| WasItAIGenerated | General | Limited | ✅ Yes | No |
| AICheckerPro | General | Limited | ✅ Yes | Check Vendor |

What Are the Biggest Limitations of AI Detection Tools?

The core limitation is false positives, and they fall unevenly on certain student populations. Non-native English speakers and students with some learning differences are flagged at higher rates than native speakers writing in a similar style [1].

Other key limitations:

- Evasion is possible. Students who know how detectors work can edit AI output enough to reduce scores significantly [4]

- Mixed content is hard to catch. A student who uses AI for an outline and writes the essay themselves may score low on AI probability [1]

- Scores on short texts are unreliable. Most tools need at least 250–300 words to produce meaningful scores

- Rapidly evolving AI models mean detectors are always somewhat behind the latest generation of text generators [2]

- No universal standard exists for what score threshold justifies concern, let alone action

“Free AI detectors show mixed results with particular inconsistency across different writing styles and non-native English submissions.” — Kritik [4]

Edge case to watch: A student with a highly formal, structured writing style (common in some academic traditions) may consistently score higher on AI probability even when writing entirely their own work. This is why institutional policy should require human review before any accusation is made.

How Do Institutions Use AI Detection Tools in Practice?

The most effective institutional approaches combine multiple tools with human judgment, not a single detector used in isolation. The University of California system’s experience is instructive: by pairing multiple AI detection tools with structured human review processes, they achieved a 40% reduction in confirmed academic integrity violations and a 25% increase in faculty confidence in assessment security [1].

A practical institutional workflow looks like this:

- Set a clear AI use policy before deploying any detection tool — students need to know what’s permitted

- Run submissions through a primary detector (ideally one with academic-specific features)

- Flag submissions above a defined threshold for human review, not automatic action

- Review flagged submissions in context — consider the student’s history, writing style, and the assignment type

- Conduct a follow-up conversation with the student before any formal process begins

- Document everything — detection scores, human review notes, and the outcome
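The routing step in the workflow above can be sketched in a few lines. This is a minimal illustration, not any vendor's API: the `Submission` type, the `triage` function, and the 0.80 threshold are all hypothetical, and the 250-word minimum reflects the lower end of the 250–300 word guidance mentioned earlier. The key design choice is that the function only ever routes a submission to a person; it never returns a penalty.

```python
from dataclasses import dataclass

MIN_WORDS = 250          # below this, detector scores are treated as unreliable
REVIEW_THRESHOLD = 0.80  # hypothetical; each institution must set its own


@dataclass
class Submission:
    student_id: str
    text: str
    ai_score: float  # detector output scaled to the range 0..1 (assumed)


def triage(sub: Submission) -> str:
    """Return a routing decision for a scored submission."""
    if len(sub.text.split()) < MIN_WORDS:
        return "too_short_for_reliable_score"
    if sub.ai_score >= REVIEW_THRESHOLD:
        return "human_review"
    return "no_action"


def audit_record(sub: Submission) -> dict:
    """Capture the score and routing decision so the outcome can be documented."""
    return {
        "student_id": sub.student_id,
        "ai_score": sub.ai_score,
        "decision": triage(sub),
    }


# Hypothetical example: a 300-word essay with a high detector score.
essay = Submission(student_id="s-001", text="word " * 300, ai_score=0.91)
print(audit_record(essay)["decision"])  # human_review
```

Reviewer notes and the final outcome would be appended to the same record by whoever handles the case.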

Process-tracking tools add another layer here. These tools monitor how a document was written — tracking edits, time spent, and writing patterns — rather than just analyzing the final text [4]. They shift the focus from “did AI write this?” to “how was this work developed?” That’s a more defensible basis for academic integrity decisions.
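As a rough sketch of what a process-tracking summary might contain, the code below condenses a hypothetical edit log into three numbers: how many writing sessions occurred, how much active time they covered, and what share of the text arrived by paste. The `EditEvent` fields and the 30-minute session gap are assumptions; real products record different data and do not necessarily work this way.

```python
from dataclasses import dataclass


@dataclass
class EditEvent:
    timestamp: float  # seconds since the document was created
    chars_added: int  # characters inserted by this event
    pasted: bool      # True if the text arrived via a paste action


def summarize(events: list[EditEvent], session_gap: float = 1800.0) -> dict:
    """Summarize an edit log into sessions, active time, and pasted share.

    Events separated by more than `session_gap` seconds start a new session;
    gaps inside a session count toward active writing time.
    """
    ordered = sorted(events, key=lambda e: e.timestamp)
    total_chars = sum(e.chars_added for e in ordered)
    pasted_chars = sum(e.chars_added for e in ordered if e.pasted)

    sessions = 0
    active_seconds = 0.0
    previous = None
    for event in ordered:
        if previous is None or event.timestamp - previous > session_gap:
            sessions += 1
        else:
            active_seconds += event.timestamp - previous
        previous = event.timestamp

    return {
        "sessions": sessions,
        "active_seconds": active_seconds,
        "pasted_fraction": pasted_chars / total_chars if total_chars else 0.0,
    }


# Hypothetical log: two typed edits a minute apart, then one large paste later.
log = [
    EditEvent(timestamp=0, chars_added=100, pasted=False),
    EditEvent(timestamp=60, chars_added=100, pasted=False),
    EditEvent(timestamp=4000, chars_added=800, pasted=True),
]
print(summarize(log))
```

A high pasted share is not evidence of AI use by itself, since students also paste from their own notes. Like a detector score, it is a prompt for a conversation.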

What Emerging Technologies Are Changing Academic AI Detection?

Beyond text scoring, three technologies are reshaping how institutions verify academic work in 2026.

1. Biometric authentication during assessments: Keystroke dynamics, gaze tracking, and voice recognition are becoming more accessible for online exam environments. These tools verify that the person submitting work is the same person who completed it [1].

2. Blockchain-based verification: Several institutions are piloting cryptographically secure transcript and submission records. These create tamper-proof evidence of when work was submitted and in what form [1]. This doesn’t detect AI use directly, but it closes off some contract cheating pathways.

3. Behavioral analytics: Some platforms now track writing behavior patterns over time, building a baseline for each student. A submission that deviates sharply from a student’s established patterns triggers review, regardless of what a text-based detector says [1].
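The baseline idea behind behavioral analytics can be sketched with a simple z-score. This is an illustration under stated assumptions, not a description of any platform's method: the tracked feature (average sentence length per past submission), the function names, and the threshold of 3 standard deviations are all hypothetical.

```python
from statistics import mean, stdev


def deviation_from_baseline(history: list[float], new_value: float) -> float:
    """Z-score of `new_value` relative to a student's past values.

    Requires at least two past values so a sample standard deviation exists.
    """
    if len(history) < 2:
        raise ValueError("need at least two past submissions for a baseline")
    spread = stdev(history)
    center = mean(history)
    if spread == 0:
        # Every past value is identical, so any change is infinitely unusual.
        return 0.0 if new_value == center else float("inf")
    return (new_value - center) / spread


def needs_review(history: list[float], new_value: float, z_threshold: float = 3.0) -> bool:
    """Route to human review when the new value is far from the baseline."""
    return abs(deviation_from_baseline(history, new_value)) > z_threshold


# Hypothetical baseline: average sentence length in four earlier essays.
past_avg_sentence_length = [14.0, 15.0, 16.0, 15.0]
print(needs_review(past_avg_sentence_length, 24.0))  # True
print(needs_review(past_avg_sentence_length, 15.5))  # False
```

A sharp deviation can have innocent causes, such as tutoring, a new genre, or simple improvement, so the output is a reason to look closer rather than a finding.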

These approaches are complementary to text-based detection, not replacements for it. The most forward-looking institutions are treating academic integrity as a multi-layer verification problem rather than a single-tool solution.

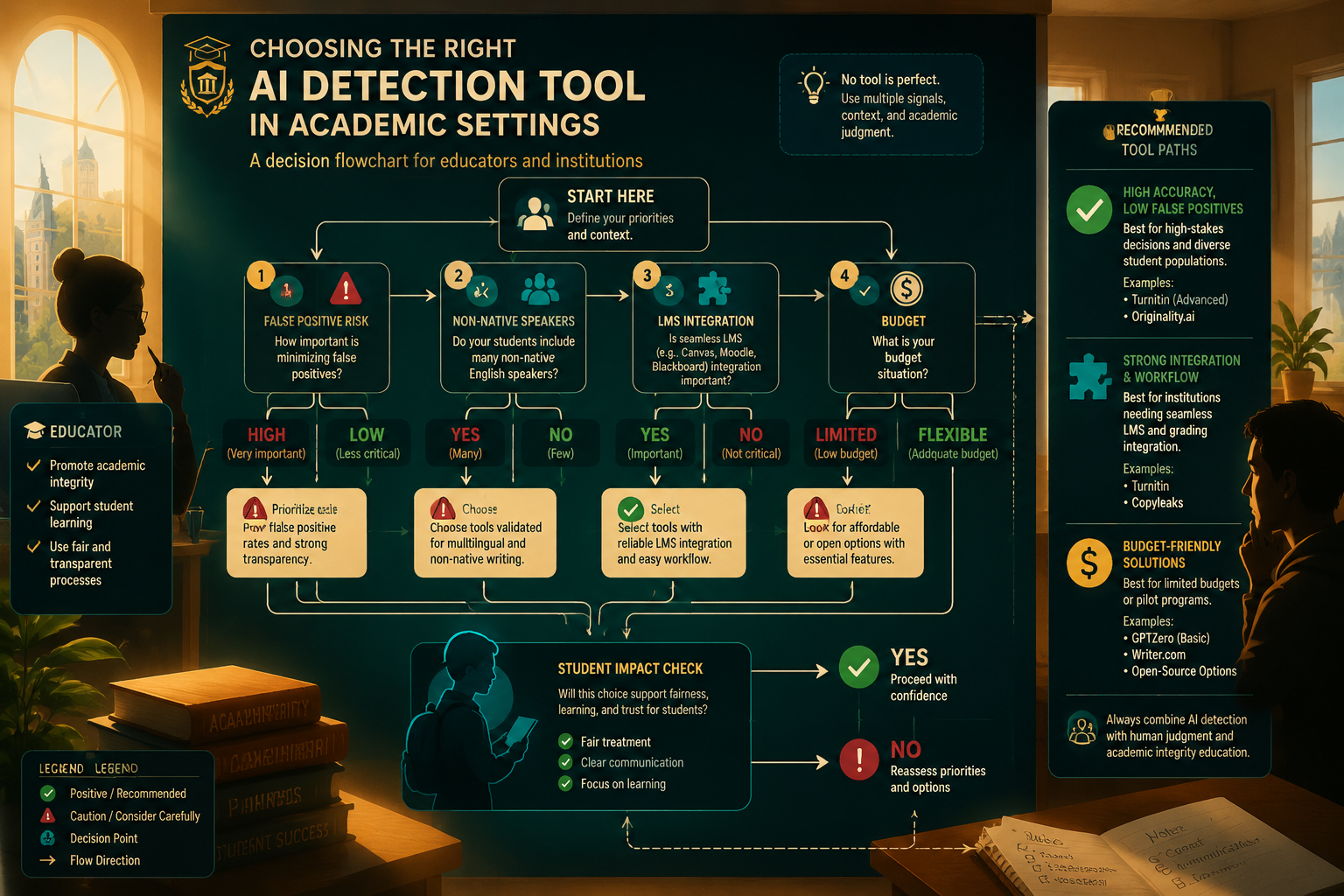

How Should Educators Choose Between These Tools?

Choose based on your specific context: submission volume, student population, institutional policy, and budget. Here’s a practical decision guide:

- Choose Proofademic AI if you want a tool built specifically for academic integrity with educator-focused reporting and you’re willing to pay for a dedicated solution

- Choose Paperpal if your institution also wants to support ethical AI-assisted writing and needs sentence-level feedback for student conversations

- Choose Pangram AI if you prioritize detection sensitivity and have a process for handling false positive follow-up

- Choose ContentDetect.ai if budget is the primary constraint and you need occasional checks rather than systematic screening

- Choose AICheckerPro if you’re processing high submission volumes and need batch capabilities

What to check before committing to any tool:

- Does it integrate with your LMS (Canvas, Blackboard, Moodle)?

- What’s the false positive rate on non-native English text?

- Does it provide sentence-level detail or just an overall score?

- How does the vendor handle data privacy for student submissions?

- Is the pricing per-check, per-user, or institutional?
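The false positive question in the checklist above is one an institution can answer for itself by running a candidate tool on writing it knows to be human. The sketch below computes the false positive rate per student group from such a labeled sample. The tuple layout, the group labels, the 0.5 threshold, and the scores are all made up for illustration.

```python
from collections import defaultdict


def false_positive_rate_by_group(
    samples: list[tuple[str, bool, float]], threshold: float = 0.5
) -> dict[str, float]:
    """Per-group share of human-written samples that the detector flags.

    Each sample is (group, is_ai_generated, detector_score). Samples known
    to be AI-generated are skipped, since they cannot be false positives.
    """
    flagged: dict[str, int] = defaultdict(int)
    humans: dict[str, int] = defaultdict(int)
    for group, is_ai_generated, score in samples:
        if is_ai_generated:
            continue
        humans[group] += 1
        if score >= threshold:
            flagged[group] += 1
    return {group: flagged[group] / count for group, count in humans.items()}


# Hypothetical pilot data: (group, known to be AI-generated?, detector score).
pilot = [
    ("native", False, 0.1),
    ("native", False, 0.2),
    ("native", False, 0.7),
    ("native", False, 0.3),
    ("non_native", False, 0.6),
    ("non_native", False, 0.8),
    ("non_native", False, 0.2),
    ("non_native", False, 0.4),
    ("native", True, 0.9),
]
print(false_positive_rate_by_group(pilot))
# {'native': 0.25, 'non_native': 0.5}
```

A pilot this small proves nothing statistically. The point is the shape of the check: compare groups on known human writing before trusting a vendor's headline accuracy figure.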

FAQ: AI Detection Tools for Academic Integrity

Q: Can students fool AI detectors by editing AI-generated text? Yes. Significant human editing — especially rewriting sentences and adding personal voice — can reduce AI probability scores substantially. Detection accuracy for heavily edited AI content is estimated below 60% [1].

Q: Is a high AI detection score proof of cheating? No. It’s a signal that warrants review, not evidence of wrongdoing. Institutional policy should require human judgment before any formal action [7].

Q: Are free AI detectors good enough for academic use? For occasional, low-stakes checks, they can be useful. For systematic institutional use, free tools show too much inconsistency — especially with non-native English submissions — to be reliable [4].

Q: Do AI detectors work on languages other than English? Some tools include cross-language detection, but accuracy is generally lower for non-English submissions. Always check vendor documentation for specific language support [1].

Q: What’s the difference between an AI detector and a plagiarism checker? A plagiarism checker matches text against known sources. An AI detector analyzes statistical patterns in the text itself to estimate whether a human or AI wrote it. They measure different things and are best used together [3].

Q: Can AI detectors identify which AI model was used? Some tools use linguistic fingerprinting to identify patterns associated with specific models (GPT-4, Claude, Gemini, etc.), but this capability is imprecise and should not be used as definitive evidence [1].

Q: How long does text need to be for reliable detection? Most tools need at least 250–300 words for meaningful results. Shorter texts produce unreliable scores.

Q: Should institutions use AI detection as their only integrity measure? No. The most effective approaches combine detection tools with human review, clear AI use policies, process-tracking tools, and assessment design that makes AI shortcuts less useful [4].

Q: Do AI detectors flag non-native English speakers unfairly? Research indicates higher false positive rates for non-native English speakers [1]. This is a documented limitation that institutions must account for in their review processes.

Q: What should happen after a submission is flagged? The flagged submission should go to human review. If concern remains, a follow-up conversation with the student is the appropriate next step — not automatic disciplinary action.

Conclusion: What to Do Next

AI detection for academic integrity is moving fast, but the fundamentals are clear. No single tool is accurate enough to use as a standalone judge of student work. The institutions getting this right are combining multiple detection approaches with strong human review processes and clear, communicated policies.

Actionable next steps:

- Audit your current AI policy — if you don’t have one, write one before deploying any detection tool

- Test 2–3 tools on a sample of known submissions (human-written and AI-generated) to see how they perform with your student population

- Read the detailed reviews of Proofademic AI and Paperpal to compare academic-specific features

- Build a human review step into your workflow — make it mandatory before any accusation

- Consider process-tracking tools as a complement to text-based detection

- Revisit your assessment design — assignments that require personal reflection, live demonstration, or iterative drafts are harder to complete with AI alone

The goal isn’t to catch every student who uses AI. It’s to maintain the value of academic credentials and support genuine learning. The right tools, used thoughtfully, help with both.

References

[1] The Cheating Evolution: How Advanced AI Detection Tools Are Reshaping Academic Integrity and Creating Smarter Assessment Strategies in 2024 – https://www.evelynlearning.com/blog/the-cheating-evolution-how-advanced-ai-detection-tools-are-reshaping-academic-integrity-and-creating-smarter-assessment-strategies-in-2024

[2] How AI Document Detection Tools Enhance Academic Integrity in 2026 – https://vertu.com/ai-tools/how-ai-document-detection-tools-enhance-academic-integrity-in-2026/

[3] How AI Plagiarism Detection Is Changing Academic Integrity – https://www.edusageai.com/blogs/how-ai-plagiarism-detection-is-changing-academic-integrity

[4] Why AI Detection Fails and What to Do Instead: AI Detector Alternatives – https://www.kritik.io/blog-post/why-ai-detection-fails-and-what-to-do-instead—ai-detector-alternatives

[7] Detection Software – https://academictech.uchicago.edu/detection-software/